ESP32 AI Text-to-Speech: Cloud-Powered Voice Output

2026-02-04 | By Rinme Tom

License: General Public License

Introduction

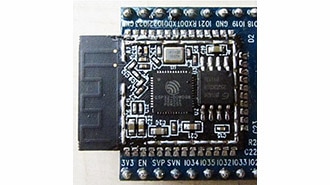

Speech output is a foundational feature of modern technology, used in voice assistants, accessibility tools, alerts, and interactive gadgets. Computers and smartphones can run text-to-speech (TTS) locally thanks to fast processors and ample memory, but microcontrollers like the ESP32 face tighter constraints: limited RAM, modest clock speeds, and only basic onboard audio hardware make high-quality, natural-sounding speech synthesis impractical on the chip itself. The solution is to offload speech generation to a cloud-based AI service and stream the resulting audio back to the ESP32 for playback. This guide walks through building an ESP32 AI-based text-to-speech system using Wit.ai and widely available audio hardware, aimed at DIYers, makers, and embedded developers.

Why Use AI-Enabled TTS with ESP32

Text-to-speech is more involved than simply “reading words aloud.” Effective TTS involves converting numbers and symbols into words (for example, reading “12:30” as “twelve thirty”), analyzing pronunciation, determining word stress and pauses, and generating smooth, natural-sounding audio. A desktop computer or phone handles these tasks easily, but on a resource-limited microcontroller the same workload would quickly exceed the available memory and processing power and leave little headroom for the rest of the firmware.

To overcome these restrictions, this project uses an online AI service (Wit.ai) to handle the heavy lifting of text processing and speech synthesis. The workflow looks like this:

The ESP32 sends your text to Wit.ai via Wi-Fi.

Wit.ai synthesizes speech from the text and returns it as audio in a format such as MP3 or WAV.

The ESP32 streams and plays the received audio using an external amplifier and speaker.

This approach adds natural-sounding voice output to embedded projects while the ESP32 itself only handles networking and audio playback.
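The first step of that workflow, sending text to Wit.ai, mostly comes down to wrapping the sentence in a small JSON request body. The sketch below shows that step in portable C++ (the language Arduino sketches compile as). It assumes Wit.ai's `/synthesize` endpoint accepts a body of the form `{"q": "...", "voice": "..."}`; those field names are assumptions to check against the current Wit.ai HTTP API reference, and `jsonEscape` and `buildSynthesizeBody` are hypothetical helpers, not functions from the WitAITTS library. The detail worth getting right is escaping: a quote or newline typed into the serial monitor would otherwise produce invalid JSON.

```cpp
#include <cstdio>
#include <string>

// Escape a string so it can be embedded inside a JSON string literal.
// Handles quotes, backslashes, and control characters such as newlines.
std::string jsonEscape(const std::string& in) {
  std::string out;
  out.reserve(in.size() + 8);
  for (unsigned char c : in) {
    switch (c) {
      case '"':  out += "\\\""; break;
      case '\\': out += "\\\\"; break;
      case '\n': out += "\\n";  break;
      case '\r': out += "\\r";  break;
      case '\t': out += "\\t";  break;
      default:
        if (c < 0x20) {
          // Remaining control characters use the \u00XX form.
          char buf[7];
          std::snprintf(buf, sizeof(buf), "\\u%04x", static_cast<unsigned>(c));
          out += buf;
        } else {
          out += static_cast<char>(c);  // UTF-8 bytes pass through unchanged
        }
    }
  }
  return out;
}

// Build the request body. "q" and "voice" are ASSUMED field names for
// Wit.ai's synthesize endpoint; verify them against the Wit.ai docs.
std::string buildSynthesizeBody(const std::string& text, const std::string& voice) {
  return "{\"q\":\"" + jsonEscape(text) + "\",\"voice\":\"" + jsonEscape(voice) + "\"}";
}
```

The voice name is a placeholder here; use one of the voices listed for your Wit.ai app's language.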

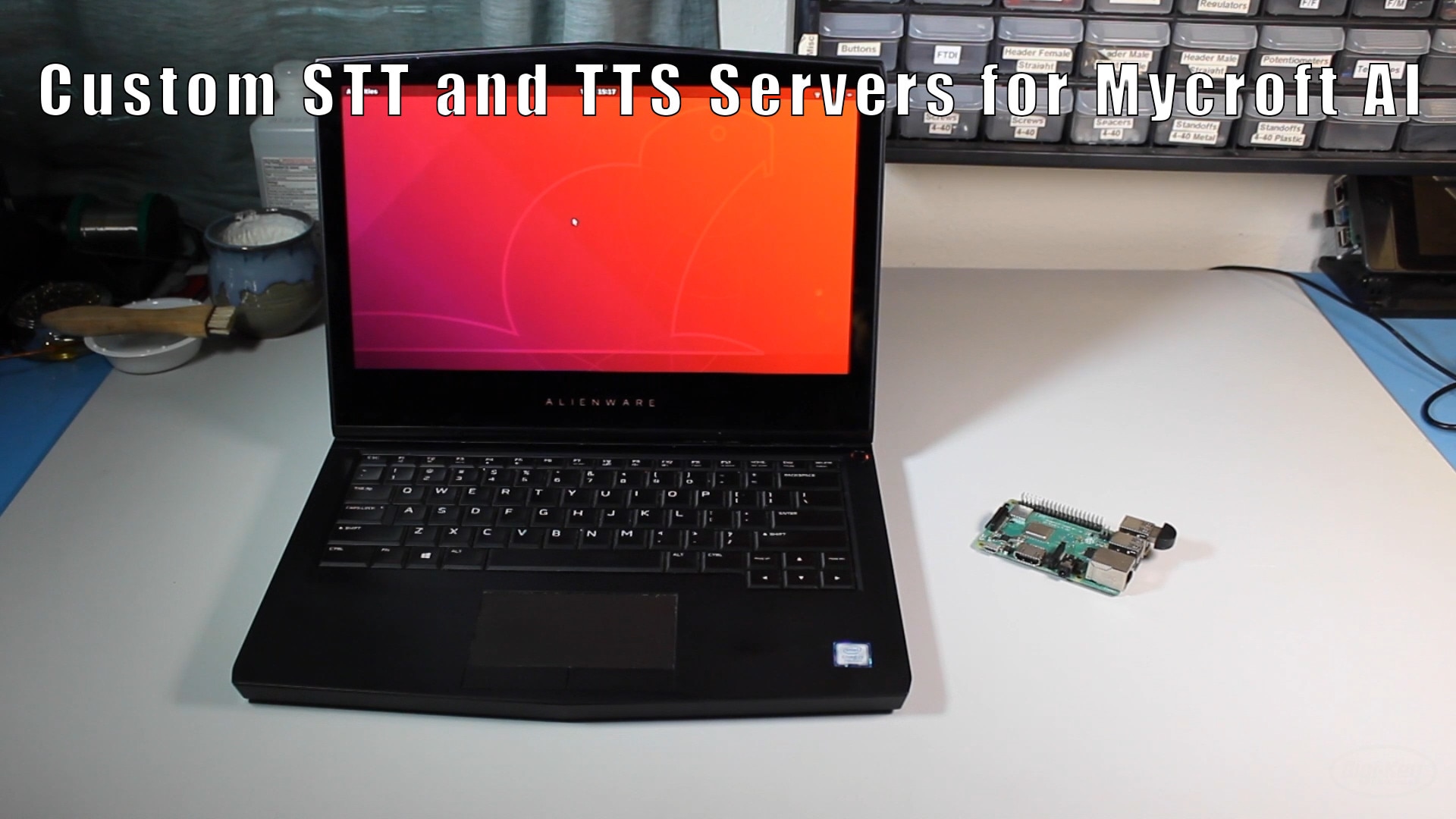

System Architecture Overview

Before diving into hardware and software, let’s examine how the full system operates:

User input – Text is typed into the serial monitor or generated by the firmware.

ESP32 Wi-Fi connection – The microcontroller connects to a local wireless network.

Cloud API request – The ESP32 sends the text string to the Wit.ai TTS endpoint.

Speech synthesis – Wit.ai converts the text into spoken audio (MP3 or WAV stream).

Audio streaming – The ESP32 receives the audio stream in chunks.

Digital audio output (I2S) – The ESP32 sends decoded PCM audio to an external DAC/amplifier.

Sound playback – A connected speaker outputs the speech in real time.

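Because audio is handled chunk by chunk, memory usage stays low and playback can begin before the full clip has arrived. Speech quality is set by the cloud voice rather than by the ESP32's processing power, although the network round trip adds some delay before playback starts.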
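When the service returns WAV rather than MP3, the first bytes of the stream are a RIFF header describing the sample rate, channel count, and bit depth, and the I2S output has to be configured to match before the PCM data that follows is played. The sketch below is a minimal, standalone parser for that header in portable C++. It assumes uncompressed PCM WAV (format code 1) and illustrates the file format rather than the WitAITTS library's internal code; `WavInfo` and `parseWavHeader` are hypothetical names.

```cpp
#include <cstddef>
#include <cstdint>
#include <cstring>

// Audio parameters needed to configure the I2S peripheral.
struct WavInfo {
  uint16_t channels = 0;
  uint32_t sampleRate = 0;
  uint16_t bitsPerSample = 0;
  size_t dataOffset = 0;  // byte offset where PCM samples begin
};

static uint16_t readLE16(const uint8_t* p) { return uint16_t(p[0] | (p[1] << 8)); }
static uint32_t readLE32(const uint8_t* p) {
  return uint32_t(p[0]) | (uint32_t(p[1]) << 8) | (uint32_t(p[2]) << 16) | (uint32_t(p[3]) << 24);
}

// Parse the start of a RIFF/WAVE stream. Returns false if the buffer does not
// yet hold a complete header or the audio is not uncompressed PCM.
bool parseWavHeader(const uint8_t* buf, size_t len, WavInfo& info) {
  if (len < 12 || std::memcmp(buf, "RIFF", 4) != 0 || std::memcmp(buf + 8, "WAVE", 4) != 0)
    return false;
  bool haveFmt = false;
  size_t pos = 12;
  // Walk the chunk list: each chunk is a 4-byte ID followed by a 4-byte size.
  while (pos + 8 <= len) {
    const uint8_t* id = buf + pos;
    uint32_t size = readLE32(buf + pos + 4);
    size_t body = pos + 8;
    if (std::memcmp(id, "data", 4) == 0) {
      // Streamed WAV may carry a placeholder data size, so it is not used.
      info.dataOffset = body;
      return haveFmt;
    }
    if (std::memcmp(id, "fmt ", 4) == 0) {
      if (size < 16 || body + 16 > len) return false;
      if (readLE16(buf + body) != 1) return false;  // 1 = uncompressed PCM
      info.channels = readLE16(buf + body + 2);
      info.sampleRate = readLE32(buf + body + 4);
      info.bitsPerSample = readLE16(buf + body + 14);
      haveFmt = true;
    }
    if (size > len - body) return false;  // chunk extends past what we have
    pos = body + size + (size & 1);       // chunks are padded to even length
  }
  return false;  // header incomplete: wait for more bytes
}
```

Returning `false` for an incomplete header lets the caller keep reading network chunks into a small buffer until the header is complete, then start I2S output at `dataOffset`.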

Configuring Your Wit.ai Account

The ESP32 requires access to the Wit.ai API to send text and receive speech audio. Follow these steps:

Create a Wit.ai account.

Sign up through email or a social login.

Create a new application.

Choose a meaningful name and select your primary language.

Retrieve the API token.

Navigate to Settings → HTTP API to find your server access token.

Secure the token.

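Treat the token like a password. A microcontroller sketch typically has no environment variables at runtime, so a common approach is to keep the token in a separate header file (for example, secrets.h) that is excluded from version control, rather than in the main sketch you share or publish.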

Installing Libraries & Preparing Code

To simplify integration, an open-source WitAITTS library is available via the Arduino Library Manager:

In the Arduino IDE, open the Library Manager and search for “WitAITTS.”

Install the library.

Load the example sketch (File → Examples → WitAITTS → ESP32_Basic).

Replace the placeholder values with your Wi-Fi credentials and Wit.ai server access token.

The library handles communication with Wit.ai and audio streaming, and hides the low-level details so you can focus on your project code.
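To show roughly what “communication with Wit.ai” involves under the hood, the sketch below assembles the raw HTTP request a client might send. It assumes the endpoint `https://api.wit.ai/synthesize` with a `v` date-version query parameter, bearer-token authorization, and an `Accept` header that selects the audio format; the `audio/wav` value and the exact parameters are assumptions to confirm against the current Wit.ai documentation, and `buildSynthesizeRequest` is a hypothetical helper, not the WitAITTS library's code. On the ESP32 the request would be written over a TLS connection (for example, Arduino-ESP32's `WiFiClientSecure`), which is omitted so the example stays portable.

```cpp
#include <string>

// Assemble an HTTP/1.1 request for the (ASSUMED) Wit.ai synthesize endpoint.
// `jsonBody` must already be valid JSON; `token` is the server access token;
// `apiVersion` is a date string such as "YYYYMMDD" (placeholder format).
std::string buildSynthesizeRequest(const std::string& token,
                                   const std::string& jsonBody,
                                   const std::string& apiVersion) {
  std::string req;
  req += "POST /synthesize?v=" + apiVersion + " HTTP/1.1\r\n";
  req += "Host: api.wit.ai\r\n";
  req += "Authorization: Bearer " + token + "\r\n";
  req += "Content-Type: application/json\r\n";
  req += "Accept: audio/wav\r\n";  // assumed: WAV avoids needing an MP3 decoder
  req += "Content-Length: " + std::to_string(jsonBody.size()) + "\r\n";
  req += "Connection: close\r\n";
  req += "\r\n";  // blank line separates headers from the body
  req += jsonBody;
  return req;
}
```

Requesting WAV rather than MP3 is a design choice worth considering on the ESP32: PCM can go straight to I2S after the header, whereas MP3 needs a decoder library and extra RAM.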

Streaming and Playback

Once the sketch is uploaded:

Open the serial monitor to track connection status.

Type a sentence and press Enter.

The ESP32 sends each sentence to Wit.ai, then streams the synthesized speech back and plays it through the speaker.
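The serial-monitor loop described in these steps needs to collect typed characters until Enter is pressed, then hand the finished sentence to the TTS request. The portable C++ sketch below shows one way to do that; the class name `LineReader` and the length cap are illustrative assumptions, not part of the WitAITTS example sketch. On the ESP32 you would feed it from `Serial.read()` inside `loop()` whenever `Serial.available()` is non-zero.

```cpp
#include <cstddef>
#include <string>

// Accumulates characters (as they would arrive from Serial.read()) into
// complete lines. Handles "\n", "\r", and "\r\n" endings, since the Arduino
// serial monitor can be configured to send any of them.
class LineReader {
 public:
  explicit LineReader(std::size_t maxLen) : maxLen_(maxLen) {}

  // Feed one character. Returns true and fills `line` when a non-empty
  // line is complete; otherwise returns false.
  bool feed(char c, std::string& line) {
    if (c == '\r' || c == '\n') {
      if (buf_.empty()) return false;  // skip blank lines and the LF of CRLF
      line.swap(buf_);
      buf_.clear();
      return true;
    }
    if (buf_.size() < maxLen_) buf_ += c;  // drop characters beyond the cap
    return false;
  }

 private:
  std::size_t maxLen_;
  std::string buf_;
};
```

Capping the line length bounds RAM use on a device with a small heap; silently truncating is a conservative choice, and a real sketch might instead warn the user over serial.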

Audio is streamed rather than downloaded in one file, which reduces memory requirements and improves responsiveness. Playback quality depends on network stability, speaker quality, and power supply noise.
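The memory argument is easy to check with arithmetic. Assuming 16-bit mono PCM at 16 kHz (an illustrative format; the real sample rate depends on what the service returns), one second of audio is 16,000 × 2 = 32,000 bytes, so a ten-second sentence is 320,000 bytes, a large fraction of the original ESP32's 520 KB of on-chip SRAM. Streaming reuses one small buffer instead. One detail to handle is that a network chunk can end halfway through a 16-bit sample; the sketch below carries the dangling byte over to the next chunk. `pcmBytes` and `PcmAssembler` are hypothetical names, and the callback stands in for whatever call writes samples to the I2S peripheral.

```cpp
#include <cstddef>
#include <cstdint>
#include <functional>
#include <utility>

// Bytes needed to hold a whole clip of uncompressed PCM in RAM.
constexpr std::size_t pcmBytes(std::size_t sampleRate, std::size_t channels,
                               std::size_t bytesPerSample, std::size_t seconds) {
  return sampleRate * channels * bytesPerSample * seconds;
}

// Turns arbitrary-sized byte chunks of 16-bit little-endian PCM into samples
// using a fixed-size buffer. A chunk may end halfway through a sample, so
// the odd byte is kept until the next chunk arrives.
class PcmAssembler {
 public:
  using Sink = std::function<void(const int16_t*, std::size_t)>;
  explicit PcmAssembler(Sink sink) : sink_(std::move(sink)) {}

  void push(const uint8_t* data, std::size_t len) {
    std::size_t n = 0;  // samples currently held in out_
    for (std::size_t i = 0; i < len; ++i) {
      if (!havePending_) {
        pending_ = data[i];  // low byte; wait for the high byte
        havePending_ = true;
        continue;
      }
      out_[n++] = static_cast<int16_t>(pending_ | (data[i] << 8));
      havePending_ = false;
      if (n == kBatch) {  // buffer full: hand it to the audio output
        sink_(out_, n);
        n = 0;
      }
    }
    if (n > 0) sink_(out_, n);  // flush whatever this chunk completed
  }

 private:
  static constexpr std::size_t kBatch = 256;  // 512-byte sample buffer
  Sink sink_;
  int16_t out_[kBatch];
  uint8_t pending_ = 0;
  bool havePending_ = false;
};
```

RAM use here is fixed at a few hundred bytes regardless of sentence length, which is the property that makes streaming practical on the ESP32.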

Conclusion

This ESP32 AI text-to-speech project adds natural-sounding voice output to ESP32-based designs with minimal hardware and software complexity. Delegating speech synthesis to a cloud service like Wit.ai delivers far better speech quality than the ESP32 could produce on its own while keeping the embedded firmware lightweight. Whether you’re building voice announcements, IoT feedback systems, or interactive gadgets, the approach scales from hobby prototypes to finished devices, provided they can count on a reliable network connection.